Key takeaway: A sentiment analysis of 60 technology-focused papers submitted to the Social Issues in Management division for the 2026 Academy of Management annual meeting in Philadelphia reveals a field caught between enthusiasm for AI’s potential and deep unease about its consequences. The cautionary voices outnumbered positive ones two to one.

Given all the focus on AI and other emergent technologies, the Curious George in me wanted to explore how our emerging research views these changes. For the like-minded, here is a summary of what I did and found.

I conducted a quick-and-dirty sentiment analysis of this year’s submissions to the SIM division. Of the 400 papers submitted to SIM, 60 engage substantively with technology as a central object of analysis. Artificial intelligence and machine learning (42 papers) account for the overwhelming majority, followed by digitalization and digital transformation (15), algorithmic systems (10), and platform and gig economy dynamics (3). This, in itself, suggests a significant increase in curiosity about AI.

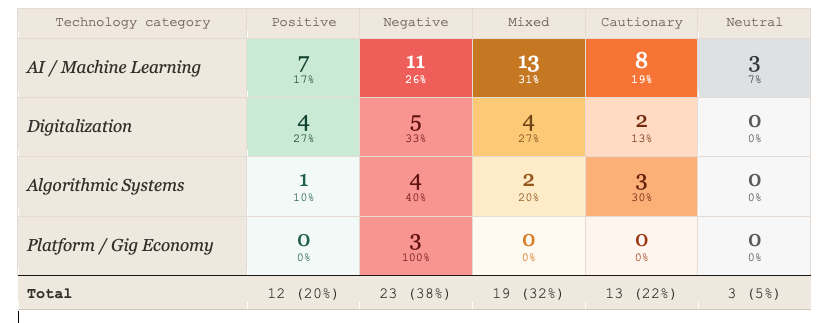

Figure 1. Sentiment toward technology by research category.

Figure 1 cross-tabulates technology category against sentiment orientation for all 60 papers. Each cell shows the number of papers and the share within that technology’s row. Color intensity tracks volume within each sentiment column: darker shading marks the highest concentration within that sentiment type.
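For anyone who wants to build a similar table, here is a minimal sketch of the cross-tabulation in Python. It is not the script behind Figure 1. The `papers` list is hypothetical toy data, and the `cross_tabulate` helper and the sentiment labels are assumptions made purely for illustration. Each cell holds a paper count and that count's share of its technology row.

```python
from collections import Counter

# Hypothetical toy labels for illustration only; NOT the actual SIM submissions.
papers = [
    ("AI/ML", "mixed"),
    ("AI/ML", "positive"),
    ("AI/ML", "cautionary"),
    ("AI/ML", "mixed"),
    ("Algorithmic systems", "cautionary"),
    ("Algorithmic systems", "cautionary"),
    ("Digitalization", "positive"),
    ("Digitalization", "cautionary"),
]


def cross_tabulate(rows):
    """Return {category: {sentiment: (count, share_of_row)}}."""
    cell_counts = Counter(rows)                             # papers per (category, sentiment)
    row_totals = Counter(category for category, _ in rows)  # papers per category
    table = {}
    for (category, sentiment), count in cell_counts.items():
        share = count / row_totals[category]                # share within the technology's row
        table.setdefault(category, {})[sentiment] = (count, share)
    return table


table = cross_tabulate(papers)
for category, cells in table.items():
    for sentiment, (count, share) in cells.items():
        print(f"{category:<20} {sentiment:<11} {count} ({share:.0%})")
```

Shading each sentiment column by count, as the heatmap does, would be a separate plotting step on top of this table.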

The heatmap makes three things immediately visible. First, as artificial intelligence has moved from peripheral novelty to central preoccupation, the tone of scholarly inquiry has shifted noticeably. The dominant scholarly strand is now one of structured concern: not rejection, but a wary insistence that the gains are conditional, the risks underacknowledged, and governance lagging badly behind.

Second, mixed sentiment is the single largest category in AI/ML papers. It is a literature that simultaneously recognizes AI’s potential and argues its perils. Third, a vast majority of the scholars recognize governance challenges associated with algorithmic systems, and they also seem cognizant of precarity, mental health risk, and control as core harms associated with the platform and gig economy.

The Responsible AI Convergence

SIM scholars are naturally concerned with responsibility and accountability, with terms like “responsible AI,” “algorithmic legitimacy,” “ethics governance,” and “accountability gaps” frequently appearing in these submissions across a variety of empirical contexts, including Indigenous tourism, corporate board oversight, and gig workers’ mental health. This convergence suggests the field is building cumulative knowledge, though several papers in the corpus note that the literature has clustered heavily around Global North and large-firm contexts. This leaves significant empirical gaps in perspectives from SMEs and developing economies.

Where Optimism Lives

Positive sentiment is concentrated in specific domains. Papers examining AI for sustainability and the work on managerial AI orientation and carbon performance frame AI as a genuine tool for environmental progress, provided it is embedded in the right strategic context. Similarly, the experiment on generative AI for CSR initiative design finds real quality improvements from AI assistance, and the machine learning approach to predicting CSR decoupling demonstrates that AI can serve as a powerful accountability instrument for stakeholders. What these positive papers share is a conditional framing: AI can contribute, given appropriate governance, when sustainability is already embedded in organizational strategy. The optimism is instrumental and bounded, not categorical.

Where Alarm Bells Ring

If the AI/ML literature is ambivalent, the algorithmic systems literature is largely alarmed. The conceptual paper framing a choice between an “Algorithmic Leviathan” and a “Digital Agora” captures the field’s central anxiety: that algorithmic systems are accreting power faster than the governance architecture required to legitimate that power is being built. Studies of algorithmic systems recommend caution, and their concerns are driven by findings on the algorithmic amplification of political polarization, privacy violations in AI-monitored workplaces, and the democratic accountability deficits of algorithm-governed commons.

Platform and gig economy scholarship offers perhaps the sharpest negative signal in the corpus, with not a single paper framing platform technologies positively. These studies document elevated anxiety, burnout, and precarity. The analysis of gig work finds that non-workers systematically underestimate platform-driven precarity.

The digitalization literature adds a subtler warning about decoupling. Scholars document a pattern in which digital transformation talk, including CEO rhetoric, ESG disclosures, and sustainability communications, runs well ahead of substantive changes. For evaluators, this suggests that digital self-reporting may require more urgent triangulation with operational evidence than in other domains.

Implications for Practice and Evaluation

For organizations deploying AI and for evaluators tasked with assessing those deployments, the corpus carries a clear message. The adoption of these technologies is not self-evidently beneficial. AI-enabled programs should increasingly be expected to address algorithmic fairness and accountability as primary evaluation criteria, not afterthoughts. This will also require changes in governance systems, ranging from board-level AI ethics oversight and HRM frameworks for algorithmic legitimacy to stakeholder engagement in AI design.

What do you all think? Do you have other questions about this year’s program? Let me know, and I’ll try to find the answers for you!
